Hshmat Sahak

I am a final-year MASc student at the University of Toronto (UofT), co-advised by Tim Barfoot and Nick Rhinehart. My MASc thesis revolves around perception and planning in indoor environments. I completed my BASc in Engineering Science at UofT, with a major in Machine Intelligence and a minor in Robotics & Mechatronics. My BASc thesis focused on people detection in cluttered indoor environments.

I am fortunate to have worked at great companies and research labs. I have previously worked at the Vector Institute for AI (advised by Prof. Jimmy Ba), Google Brain (Student Researcher, advised by Prof. David Fleet), Tesla (Software Automation Engineer Intern) and Nvidia (Deep Learning Intern). At UofT, I have completed research internships at the Data-Driven Decision-Making Lab (advised by Prof. Scott Sanner) and the Dynamic Systems Lab (advised by Prof. Angela Schoellig).

My plan is to pursue a PhD within computer vision for better robot perception and/or planning algorithms for mobile robots.

My specific research interests include:

- Robot Perception - 3D Object Detection and Semantic Segmentation for Scene Understanding

- Autonomous Path Planning for Indoor and Outdoor Navigation of Robots

- Developing efficient learning algorithms for function optimization in Deep Neural Networks

- Generative AI for Image-to-Image and Discriminative tasks, e.g., Diffusion Models, GANs, NeRF Models

- Broadly interested in Reinforcement Learning and NLP

- Broadly interested in Computer Networks and Distributed Systems

If you are a current Graduate student in Machine Learning, Robotics, or at the intersection, I would love to hear about your work! If you are an incoming Engineering Science student, feel free to connect to ask questions about the program. You can reach me at hshmat.sahak@mail.utoronto.ca

Education

University of Toronto

MASc. in Aerospace Science and Engineering | Sept 2024 - Aug 2026

Collaborative Specialization in Robotics

Advisors: Tim Barfoot, Nick Rhinehart

Thesis: Planning and navigation for indoor mobile robots

GPA: 4.00/4.00

Relevant Courses: State Estimation for Aerospace Vehicles, Imitation Learning for Robotics, AI Applications in Robotics, Mobile Robotics and Perception

University of Toronto

BASc. in Engineering Science | Sept 2019 - Apr 2024

Machine Learning Major, Robotics and Mechatronics Minor

GPA: 3.99/4.00

Relevant Courses: Machine Learning, Robot Modelling and Control, Artificial Intelligence, Control Theory, Systems Software, Computing, Probabilistic Reasoning, Distributed Systems

Work Experience

Applied ML Intern

Cerebras Systems Inc.

May 2024 - Aug 2024 · Toronto, ON, CA

Expanded MathVista and MMMU datasets by creating augmented versions with similar samples, improving model performance

in multimodal math problem solving and visual reasoning. Compared retrieval methods using CLIP, DINO, and I-JEPA

embedding spaces, leading to higher scores across benchmarks including GQA, MathVista, MMMU, and VQA. Developed

long-context datasets using clustering techniques to optimize model performance on extended sequence length tasks.

Software Automation Engineer Intern

Tesla

May 2023 - Aug 2023 · Fremont, CA, USA

Worked on Root Cause Analysis in the Cell Manufacturing Pipeline, and real-time Monitoring of Systems. Gained

familiarity with Prometheus, Grafana, InfluxDB, GoLang, React, Docker and Kubernetes. Applied ML and statistical

techniques to anomaly detection and time series forecasting.

Research Intern

Vector Institute

Jan 2023 - May 2023 · Toronto, ON, CA

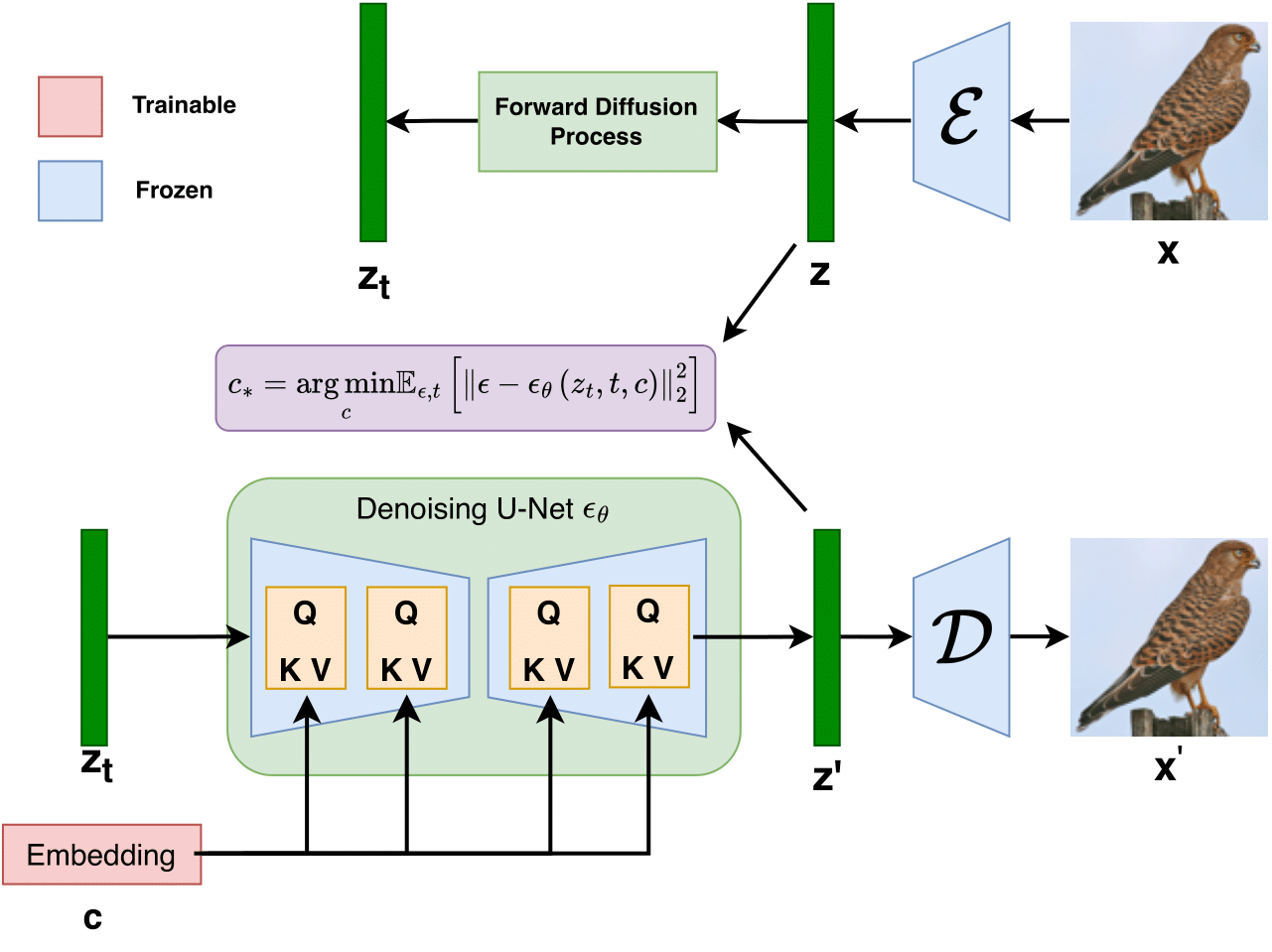

Worked on using Generative AI, specifically Diffusion Models, to improve the performance of discriminative models by training on both real and synthetic images instead of the original dataset alone. Identified 3 key requirements for data generated by diffusion-based foundation models to outperform the original dataset in downstream classification.

Student Researcher

Google Brain

May 2022 - Dec 2022 · Toronto, ON, CA

Implemented Denoising Diffusion Probabilistic Models for Robust Super-Resolution (SR) in the wild. My work resulted in a patented

publication. The proposed model, SR3+, is an improvement over SR3 and can be used for photorealistic 4x SR even when

trained on out-of-distribution datasets, and can be cascaded for 16x SR.

Research Intern

University of Toronto, Division of Cardiology

Sep 2021 - Apr 2022 · Toronto, ON, CA · Part-Time

I was the Lead Data Scientist on the Toronto Cardiology Team. I worked alongside a team of doctors; we co-authored 2 papers, both submitted to the 2022 Canadian Cardiovascular Congress. The project was to determine how patient-reported breathlessness correlates with several objective measures of respiration. I analyzed data collected from exercise stress tests and produced visualizations using Python, MATLAB and Jupyter Notebook.

Deep Learning for Power Architecture Intern

Nvidia

May 2021 - Aug 2021 · Santa Clara, CA, USA

Worked on a project using state-of-the-art machine learning techniques to enhance the dynamic boost feature for the next generation of Nvidia's GPUs. Implemented an algorithm to improve battery-mode dynamic compute power predictions by considering GPU idle time.

Research Intern

University of Toronto, Data-Driven Decision-Making Lab

May 2021 - Aug 2021 · Santa Clara, CA, USA · Part-Time

Investigated whether Twitter data was an appropriate indicator of the spread of Covid-19 across Canada and the USA. I fine-tuned a BERT model (ML model for NLP) using PyTorch to detect mask sentiments, resulting in a 41% improvement in mask attitude classification compared to vanilla VADER sentiment analysis and outperforming existing hashtag- and regex-based classifiers.

Research Intern

University of Toronto, Dynamic Systems Lab

May 2020 - Aug 2020 · Santa Clara, CA, USA · Part-Time

Designed and implemented a scalable, real-time trajectory generation algorithm using MATLAB to synchronize the flight of 50 drones with live music from a MIDI keyboard. I also surveyed 20+ safe learning research papers and categorized them by key ML concepts to aid in the creation of a safe learning survey paper.

Software Intern

Sunnybrook Research Institute

May 2020 - Aug 2020 · Santa Clara, CA, USA · Part-Time

Part of the Focused Ultrasound High School Summer Research Program. I implemented an algorithm using the Fast Fourier Transform to identify the distribution of harmonics present in ultrasound-induced motion signals. I stored lesion observations in a patient-to-observation database using SQL. I visualized lesion observations as pseudo-colour images using MATLAB, with customizable pixel colour and bit representation.
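As a rough illustration of the harmonic analysis described above (not the original MATLAB code), the sketch below uses a textbook radix-2 FFT in pure Python and reads the amplitude at each multiple of a fundamental frequency. The function names, the sample rate, and the test signal are all assumptions made for this example.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 FFT; the input length must be a power of two."""
    n = len(samples)
    if n == 1:
        return [complex(samples[0])]
    if n % 2:
        raise ValueError("length must be a power of two")
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def harmonic_amplitudes(samples, sample_rate_hz, fundamental_hz, num_harmonics):
    """Return {harmonic number: amplitude} for multiples of the fundamental.

    Hypothetical helper: it assumes each harmonic falls on (or near) an FFT
    bin and ignores spectral leakage and windowing.
    """
    n = len(samples)
    spectrum = fft(samples)
    amplitudes = {}
    for h in range(1, num_harmonics + 1):
        bin_index = round(h * fundamental_hz * n / sample_rate_hz)
        if bin_index >= n // 2:  # stop at the Nyquist frequency
            break
        amplitudes[h] = 2 * abs(spectrum[bin_index]) / n
    return amplitudes


# Synthetic signal: a 4 Hz fundamental plus a weaker second harmonic at 8 Hz.
RATE_HZ = 64
signal = [
    math.sin(2 * math.pi * 4 * t / RATE_HZ)
    + 0.5 * math.sin(2 * math.pi * 8 * t / RATE_HZ)
    for t in range(64)
]
harmonics = harmonic_amplitudes(signal, RATE_HZ, fundamental_hz=4, num_harmonics=3)
```

Here `harmonics` maps harmonic numbers 1 to 3 to their estimated amplitudes; on real motion signals a window function and peak search around each bin would likely be needed.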

Publications

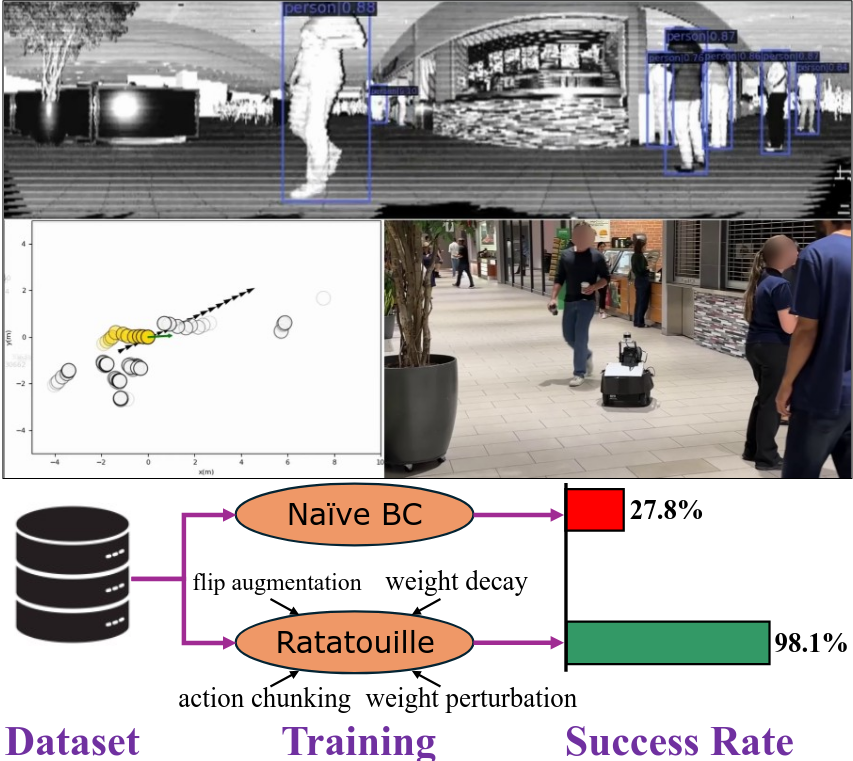

Ratatouille: Imitation Learning Ingredients for Real-world Social Robot Navigation

James R. Han, Mithun Vanniasinghe, Hshmat Sahak, Nicholas Rhinehart, Timothy D. Barfoot

Paper / Video

We present Ratatouille, a behavior cloning pipeline for social robot navigation that integrates weight perturbations, data augmentation,

hybrid action spaces, action chunking, and neural network optimization techniques. Without changing the data, Ratatouille reduces

collisions per meter by 6× and improves success rate by 3× compared to naïve behavior cloning. We validate our approach in both

simulation and the real world, collecting over 11 hours of data on a dense university campus and demonstrating qualitative results

in a public food court.

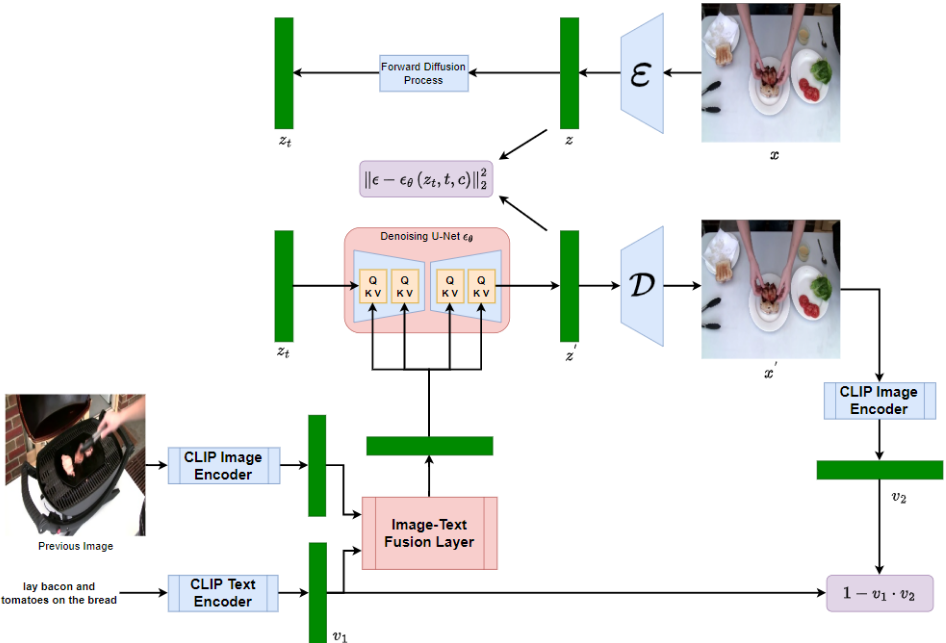

RecipeVis: Fusing Vision and Language Models to Generate Sequence of Recipe Images from Steps

Hshmat Sahak

Paper

We present RecipeVis, which generates an image for each step in a recipe by conditioning on the previously generated image and current step.

RecipeVis leverages the pretrained text-to-image Stable Diffusion model, as well as text and visual encoders that produce task-agnostic

embeddings. It uses an attention module to fuse text and image embeddings, and adds a cycle consistency loss to the standard diffusion

loss to ensure consistency between output modes. Our empirical study demonstrates that vision-language models incorporating previous

images provide superior results over baseline text-to-image models.

Training on Thin Air: Improve Image Classification with Generated Data

Yongchao Zhou, Hshmat Sahak, Jimmy Ba

Project Page / Paper / Code / Slides

We present Diffusion Inversion, a simple yet effective method that leverages the pre-trained generative model, Stable Diffusion,

to generate diverse, high-quality training data for image classification. Our approach captures the original data distribution

and ensures data coverage by inverting images to the latent space of Stable Diffusion, and generates diverse novel training images

by conditioning the generative model on noisy versions of these vectors.

Denoising Diffusion Probabilistic Models for Robust Image Super-Resolution in the Wild

Hshmat Sahak, Daniel Watson, Chitwan Saharia, David Fleet

Paper

This paper introduces SR3+, a diffusion-based model for blind super-resolution, establishing a new state-of-the-art. We advocate self-supervised training with a combination of composite, parameterized degradations, together with noise-conditioning augmentation during training and testing.

Projects

Enhancing Diffusion Models for Robust and Efficient Robot Motion Planning

[Final Project] @ University of Toronto, CSC2626: Imitation Learning for Robotics

Paper / Slides

We extend the "Planning with Diffusion for Flexible Behavior Synthesis" framework for trajectory planning in goal-conditioned reinforcement learning.

Our key contributions include middle waypoint conditioning, multi-agent trajectory planning, and dynamic obstacle avoidance, enabling the generation

of globally coherent, context-aware trajectories that adapt to complex environments. Experiments on the Maze2D task demonstrate significant improvements

in trajectory feasibility, safety, and planning efficiency, with enhanced multi-agent coordination in dynamic environments.

Diffusion Inversion: Steering Pre-Trained Diffusion Models to Improve Image Classification on Rare Datasets

[Research Project] @ Vector Institute, with Yongchao Zhou and Jimmy Ba.

We propose Diffusion Inversion, a simple yet effective method that utilizes pre-trained generative

models to assist with discriminative learning, bridging the gap between real and synthetic data.

Our method offers 2-3x sample complexity improvements and 6.5x reduction in sampling time.

Blue Guardian: Protect Before they Connect

[Research Project] @ Blue Guardian

Blue Guardian is a startup that aims to revolutionize Youth Mental Health Detection through Sentiment Analysis of text messages.

I worked on implementing state-of-the-art NLP methods for emotion detection, including feature extraction and transformer architecture

implementation. I also tackled problems of limited and imbalanced datasets resulting from uncommon emotions. Our model has been deployed

and can be accessed through the app or website.

SR3+: Using DDPMs for Robust Super-Resolution in the Wild

[Research Project] @ Google Brain, advised by David Fleet.

We introduce SR3+, a diffusion model for blind image super-resolution, outperforming SR3 and the previous

SOTA on zero-shot RealSR and DRealSR benchmarks, across different model and training set sizes. Through a

careful ablation study, we demonstrate the complementary benefits of parametric degradations and

noise conditioning augmentation techniques (with the latter also used at test time).

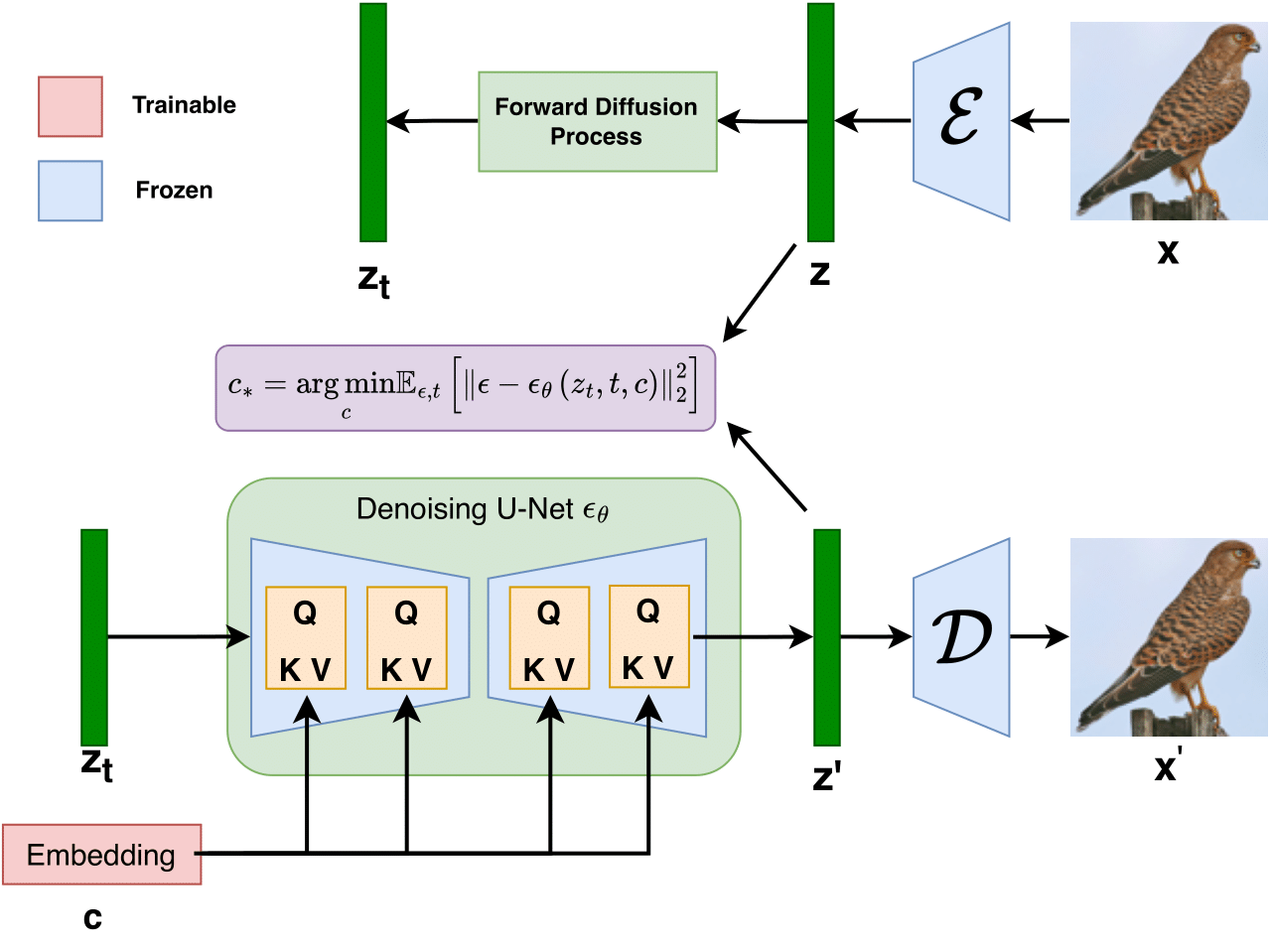

VGH-Net: Predict Restaurant Ratings from Food Images using CNN

[Final Project] @ University of Toronto, ECE324: Machine Learning, Software, and Neural Networks

Final Report / Code

We present VGH-Net, a novel architecture for predicting restaurant ratings from a set of food images. VGH-Net training occurs

in two stages. First, a CNN learns to predict image embeddings matching the output of BERT on a restaurant's text reviews.

Then, it attaches a feed-forward neural network to predict the review-specific restaurant rating from the embedding.

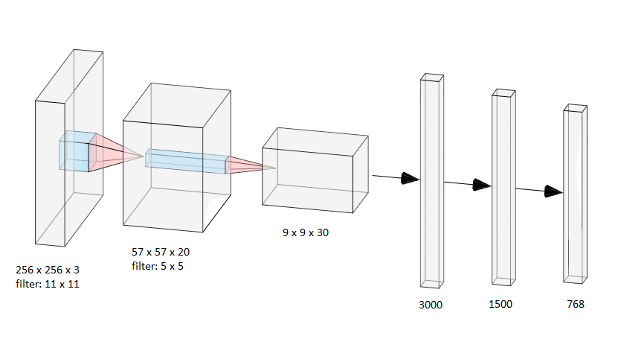

Trash n' Track: Addressing Waste Management in Ghana

[Final Project] @ University of Toronto, ESC204: Praxis III

Final Report / Slides

Trash n' Track is a modular system that can be attached to existing waste bins to let waste collectors know of the location of full bins. It

uses an ultrasonic sensor to determine the available capacity of the waste bin. This information, along with the location of the waste

bin determined from an onboard GPS module, is sent to a server using a LoRa transceiver. We have also created a webapp that connects to

this backend in order to provide live updates of waste bin fullness and location to waste collectors.
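The capacity estimate from the ultrasonic sensor can be sketched roughly as follows. This is an illustration under stated assumptions, not the project firmware: it assumes the sensor is mounted at the top of the bin facing down, and the function names, the 2 cm minimum sensor range, and the 80% "full" threshold are all hypothetical.

```python
def fill_fraction(distance_cm, empty_depth_cm, min_range_cm=2.0):
    """Estimate how full a bin is (0.0 = empty, 1.0 = full).

    distance_cm: ultrasonic reading from the sensor to the top of the waste.
    empty_depth_cm: reading for an empty bin (sensor to bin floor).
    min_range_cm: assumed smallest distance the sensor can report.
    """
    if empty_depth_cm <= min_range_cm:
        raise ValueError("empty_depth_cm must exceed min_range_cm")
    # Clamp noisy readings into the physically meaningful range.
    clamped = min(max(distance_cm, min_range_cm), empty_depth_cm)
    return (empty_depth_cm - clamped) / (empty_depth_cm - min_range_cm)


def is_full(distance_cm, empty_depth_cm, threshold=0.8):
    """Flag a bin for collection once it passes the assumed threshold."""
    return fill_fraction(distance_cm, empty_depth_cm) >= threshold
```

For example, with a 100 cm deep bin, a reading of 51 cm corresponds to a half-full bin under these assumptions, and a reading of 10 cm would flag the bin for collection.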

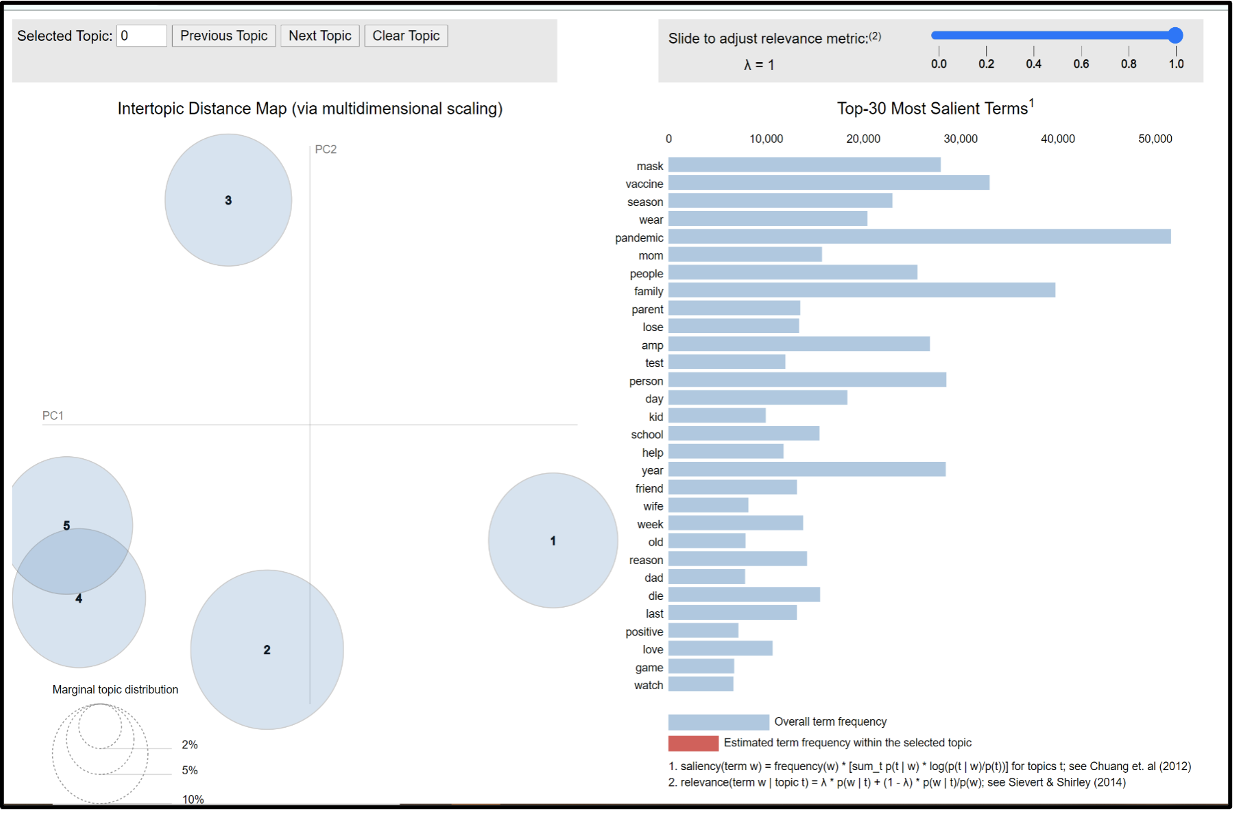

Using Twitter to detect the Spread of Covid-19

[Research Project] @ University of Toronto, Data-Driven Decision-Making Lab

Code / Slides

Compared COVID statistics in various regions with corresponding COVID-related tweet counts and mask/vaccine hesitancy scores. Used the Folium library, word clouds and topic modelling to identify the spatial and temporal distribution of tweets. For more accurate labelling, fine-tuned a BERT model using PyTorch to detect mask sentiments, improving mask attitude classification by 41% compared to vanilla VADER sentiment analysis and outperforming existing hashtag- and regex-based classifiers.

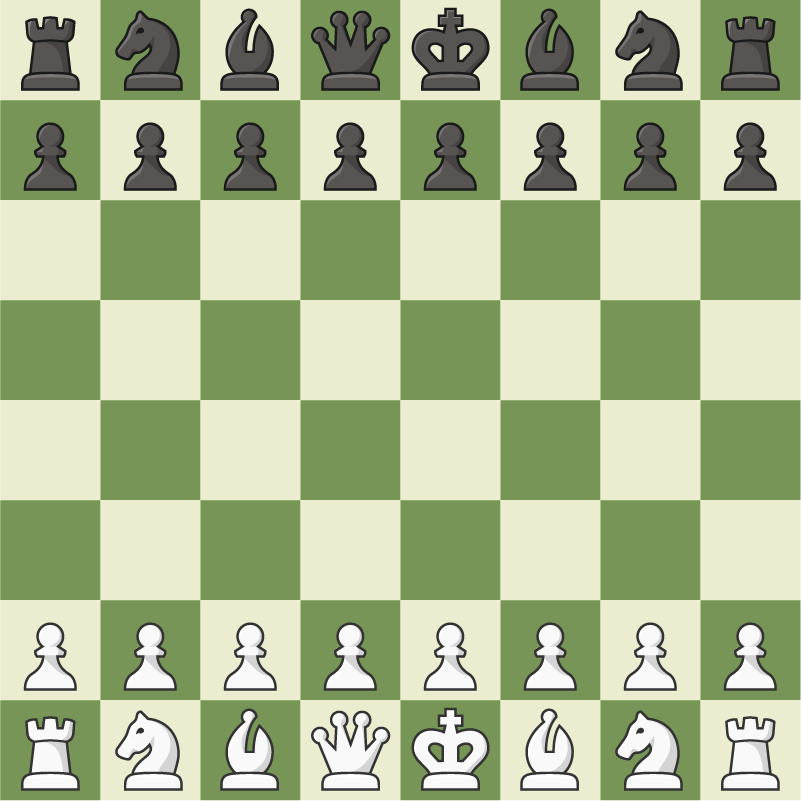

Chess AI

[Side Project], with Ritvik Singh

Code

Developed a chess engine using the Python chess module. The beta release used alpha-beta pruning on a minimax tree. The final version uses multiple advanced algorithms, including the Killer Move Heuristic, History Heuristic, Principal Variation Search, Transposition Tables, Zobrist Hashing, Quiescence Search, and Iterative Deepening. The AI is rated around 1500 Elo as measured by chess.com.
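The core idea behind the beta release, alpha-beta pruning on a minimax tree, can be sketched on a toy game tree. This is a minimal illustration rather than the engine's code: the tree is a hypothetical nested list whose leaves are static evaluation scores, and the `visited` argument exists only to show which leaves are actually evaluated.

```python
import math


def minimax(node, maximizing):
    """Plain minimax: a node is either a numeric leaf or a list of children."""
    if not isinstance(node, list):
        return node
    values = [minimax(child, not maximizing) for child in node]
    return max(values) if maximizing else min(values)


def alphabeta(node, maximizing, alpha=-math.inf, beta=math.inf, visited=None):
    """Minimax with alpha-beta pruning; returns the same value as minimax."""
    if not isinstance(node, list):
        if visited is not None:
            visited.append(node)  # record each leaf that gets evaluated
        return node
    if maximizing:
        best = -math.inf
        for child in node:
            best = max(best, alphabeta(child, False, alpha, beta, visited))
            alpha = max(alpha, best)
            if alpha >= beta:  # the minimizer above will never allow this line
                break
        return best
    best = math.inf
    for child in node:
        best = min(best, alphabeta(child, True, alpha, beta, visited))
        beta = min(beta, best)
        if alpha >= beta:  # the maximizer above already has a better option
            break
    return best


# Depth-2 toy tree: the root maximizes, its three children minimize.
TREE = [[3, 5], [2, 9], [0, 1]]
leaves_seen = []
value = alphabeta(TREE, True, visited=leaves_seen)
```

On this tree both searches agree on the root value, but alpha-beta skips the leaves 9 and 1, since the first subtree already guarantees the maximizer a score of 3. In a real engine, the children would come from a move generator and the leaves from an evaluation function.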

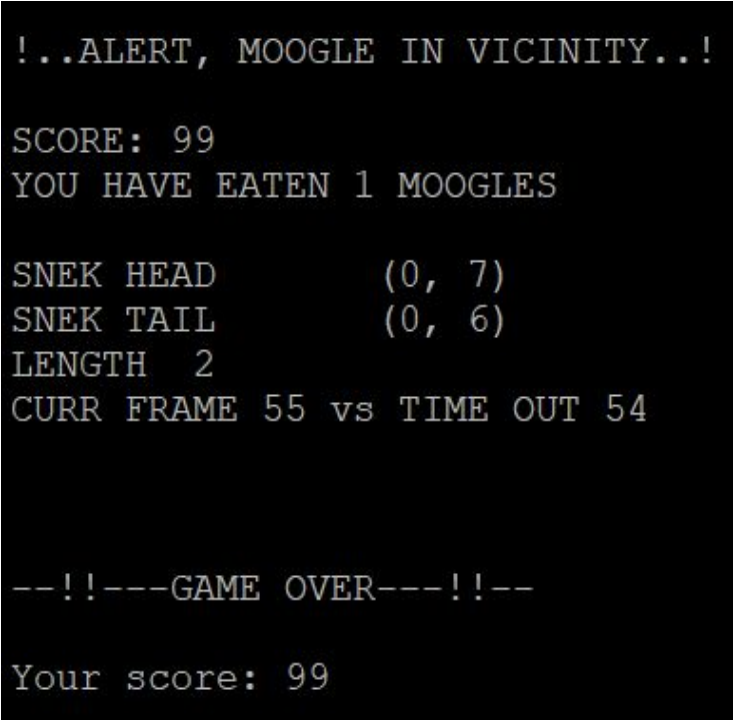

Snake Game

[Final Project] @ University of Toronto, ESC190: Data Structures and Algorithms

Final Report / Code

Developed an AI agent to play the snake game. The agent starts at the top left and aims to consume all food while avoiding the walls and its own body as it grows. For small board sizes, it uses a randomized path-finding algorithm. For larger board sizes, it uses DFS on the game state stack to find the target. The agent is flexible across varying board sizes while keeping O(n) space complexity on an n×n board.

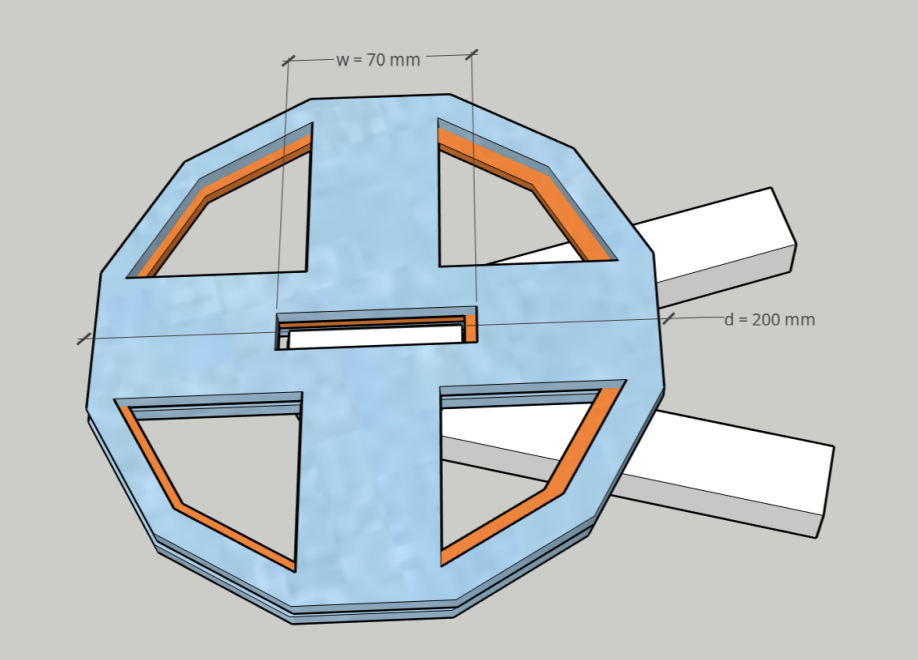

FRONT: Improved Can Openers at Kohai Life

[Final Project] @ University of Toronto, ESC102: Praxis II, with Mingde Yin, Aoran Jiao, Ryan Ghosh

Report / Demo

FRONT (Force Redirection Object Negotiator Tool) is a retrofit extension that attaches to the actuator bar of a can opener's handle, allowing cans to be opened with less directed work and making the opener easier to use in daily life for people of differing abilities. It converts a simpler pulling force in any direction into the traditional rotary force needed to cut a can. The design is intuitive: to cut the can, one simply attaches the module and pulls on a string attached to a rotary guide wheel, guaranteeing that there is some component of force in the direction of undisturbed motion (useful work).

Robot Pet

[Side Project], with Gabriel Luo

Code / Demo

Built and programmed a robotic pet that can pick up and sort objects, respond to stimuli, draw, and play the piano. Worked with an Arduino microcontroller, using Bluetooth to control the arm and various sensors for obstacle detection, path planning and environmental detection.